Naveen Verma’s lab at Princeton University is like a museum of all the ways engineers have tried to make AI ultra-efficient by using analog phenomena instead of digital computing. At one bench lies the most energy-efficient magnetic-memory-based neural-network computer ever made. At another you’ll find a resistive-memory-based chip that can compute the largest matrix of numbers of any analog AI system yet.

Neither has a commercial future, according to Verma. Less charitably, this part of his lab is a graveyard.

Analog AI has captured chip architects’ imagination for years. It combines two key concepts that should make machine learning massively less energy intensive. First, it limits the costly movement of bits between memory chips and processors. Second, instead of the 1s and 0s of logic, it uses the physics of the flow of current to efficiently do machine learning’s key computation.

As attractive as the idea has been, various analog AI schemes have not delivered in a way that could really take a bite out of AI’s stupefying energy appetite. Verma would know. He’s tried them all.

But when IEEE Spectrum visited a year ago, there was a chip at the back of Verma’s lab that represents some hope for analog AI and for the energy-efficient computing needed to make AI useful and ubiquitous. Instead of calculating with current, the chip sums up charge. It might seem like an inconsequential difference, but it could be the key to overcoming the noise that hinders every other analog AI scheme.

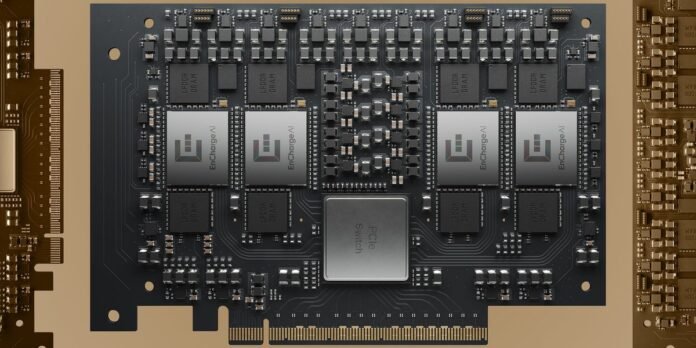

This week, Verma’s startup EnCharge AI unveiled the first chip based on this new architecture, the EN100. The startup claims the chip tackles various AI work with performance per watt up to 20 times better than competing chips. It’s designed into a single processor card that adds 200 trillion operations per second at 8.25 watts, aimed at conserving battery life in AI-capable laptops. On top of that, a 4-chip, 1,000-trillion-operations-per-second card is targeted for AI workstations.
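The card’s headline figures can be turned into efficiency numbers with a little arithmetic. The sketch below is only a back-of-the-envelope calculation from the claimed 200 trillion operations per second and 8.25 watts. The function names are made up for illustration, and it assumes the card sustains its peak rate at that power, which the announcement does not establish.

```python
def tops_per_watt(tera_ops_per_second, watts):
    """Trillions of operations per second delivered per watt."""
    return tera_ops_per_second / watts


def femtojoules_per_op(tera_ops_per_second, watts):
    """Average energy per operation, in femtojoules (1e-15 J)."""
    ops_per_second = tera_ops_per_second * 1e12
    joules_per_op = watts / ops_per_second  # watts are joules per second
    return joules_per_op * 1e15


# Claimed figures for the single-chip laptop card (assumed to be peak values).
laptop_card_efficiency = tops_per_watt(200, 8.25)
laptop_card_energy = femtojoules_per_op(200, 8.25)

print(f"{laptop_card_efficiency:.1f} TOPS per watt")
print(f"{laptop_card_energy:.2f} fJ per operation")
```

Under those assumptions the card works out to roughly 24 trillion operations per second per watt, or a few tens of femtojoules per operation.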

Current and Coincidence

In machine learning, “it turns out, by dumb luck, the main operation we’re doing is matrix multiplies,” says Verma. That’s basically taking an array of numbers, multiplying it by another array, and adding up the result of all those multiplications. Early on, engineers noticed a coincidence: Two fundamental rules of electrical engineering can do exactly that operation. Ohm’s Law says that you get current by multiplying voltage and conductance. And Kirchhoff’s Current Law says that if you have a bunch of currents coming into a point from a bunch of wires, the sum of those currents is what leaves that point. So basically, each of a bunch of input voltages pushes current through a resistance (conductance is the inverse of resistance), multiplying the voltage value, and all those currents add up to produce a single value. Math, done.
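To make the coincidence concrete, here is a minimal sketch of an idealized analog array in plain Python. It is an illustration, not a model of any real chip: the function name and the example values are invented, and it assumes perfect, noise-free conductances. Each cell applies Ohm’s Law (current equals voltage times conductance), and each output wire applies Kirchhoff’s Current Law by summing the currents that flow into it.

```python
def crossbar_output_currents(voltages, conductances):
    """Idealized analog matrix-vector multiply.

    voltages[i] is the input voltage on row i (volts).
    conductances[i][j] is the conductance linking row i to column j (siemens).
    Returns the current leaving each column wire (amperes).
    """
    n_cols = len(conductances[0])
    currents = [0.0] * n_cols
    for v, row in zip(voltages, conductances):
        for j, g in enumerate(row):
            # Ohm's Law gives the cell current; adding it to the column
            # total is Kirchhoff's Current Law at the shared wire.
            currents[j] += v * g
    return currents


voltages = [1.0, 2.0, 3.0]
conductances = [
    [0.5, 0.25],
    [0.5, 0.5],
    [1.0, 0.0],
]
column_currents = crossbar_output_currents(voltages, conductances)
print(column_currents)  # [4.5, 1.25]
```

The two output currents are exactly the two dot products a digital processor would compute with six multiplications and four additions.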

Sound good? Well, it gets better. Much of the data that makes up a neural network are the “weights,” the things by which you multiply the input. And moving that data from memory into a processor’s logic to do the work is responsible for a big fraction of the energy GPUs expend. Instead, in most analog AI schemes, the weights are stored in one of several types of nonvolatile memory as a conductance value (the resistances above). Because weight data is already where it needs to be to do the computation, it doesn’t have to be moved as much, saving a pile of energy.

The combination of free math and stationary data promises calculations that need just thousandths of a trillionth of a joule of energy. Unfortunately, that’s not nearly what analog AI efforts have been delivering.

The Trouble With Current

The fundamental problem with any kind of analog computing has always been the signal-to-noise ratio. Analog AI has that problem by the truckload. The signal, in this case the sum of all those multiplications, tends to be overwhelmed by the many possible sources of noise.

“The problem is, semiconductor devices are messy things,” says Verma. Say you’ve got an analog neural network where the weights are stored as conductances in individual RRAM cells. Such weight values are stored by setting a relatively high voltage across the RRAM cell for a defined period of time. The trouble is, you could set the exact same voltage on two cells for the same amount of time, and those two cells would wind up with slightly different conductance values. Worse still, those conductance values might change with temperature.

The differences might be small, but recall that the operation is adding up many multiplications, so the noise gets magnified. Worse, the resulting current is then turned into a voltage that is the input of the next layer of the neural network, a step that adds to the noise even more.
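A toy simulation shows how small per-cell errors pile up in a sum. This sketch is not drawn from Verma’s work: it assumes each stored weight is off by an independent Gaussian error with a 5 percent standard deviation, sets every input and weight to 1, and uses hypothetical function names. Under those assumptions, the absolute error of the accumulated result should grow roughly with the square root of the number of terms.

```python
import math
import random


def noisy_dot(inputs, weights, rel_sigma, rng):
    """Dot product in which each stored weight has a random relative error."""
    total = 0.0
    for x, w in zip(inputs, weights):
        # Assumed device variation: independent Gaussian error per cell.
        stored_w = w * (1.0 + rng.gauss(0.0, rel_sigma))
        total += x * stored_w
    return total


def rms_error(n_terms, rel_sigma, trials=500, seed=0):
    """Root-mean-square error of the noisy sum over many simulated arrays."""
    rng = random.Random(seed)
    inputs = [1.0] * n_terms
    weights = [1.0] * n_terms
    ideal = float(n_terms)
    squared = 0.0
    for _ in range(trials):
        err = noisy_dot(inputs, weights, rel_sigma, rng) - ideal
        squared += err * err
    return math.sqrt(squared / trials)


small_sum_error = rms_error(16, 0.05)
large_sum_error = rms_error(256, 0.05)
print(f"RMS error summing 16 terms:  {small_sum_error:.3f}")
print(f"RMS error summing 256 terms: {large_sum_error:.3f}")
```

Real devices add further error sources, such as temperature drift and the current-to-voltage conversion between layers, which this sketch leaves out.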

Researchers have attacked this problem from both a computer science perspective and a device physics one. In the hope of compensating for the noise, researchers have invented ways to bake some knowledge of the physical foibles of devices into their neural network models. Others have focused on making devices that behave as predictably as possible. IBM, which has done extensive research in this area, does both.

Such techniques are competitive, if not yet commercially successful, in smaller-scale systems, chips meant to provide low-power machine learning to devices at the edges of IoT networks. Early entrant Mythic AI has produced more than one generation of its analog AI chip, but it’s competing in a field where low-power digital chips are succeeding.

The EN100 card for PCs is a new analog AI chip architecture. EnCharge AI

EnCharge’s solution strips out the noise by measuring the amount of charge instead of the flow of charge in machine learning’s multiply-and-accumulate mantra. In traditional analog AI, multiplication depends on the relationship among voltage, conductance, and current. In this new scheme, it depends on the relationship among voltage, capacitance, and charge, where basically, charge equals capacitance times voltage.

Why is that difference important? It comes down to the component that’s doing the multiplication. Instead of using some finicky, vulnerable device like RRAM, EnCharge uses capacitors.

A capacitor is basically two conductors sandwiching an insulator. A voltage difference between the conductors causes charge to accumulate on one of them. The thing that’s key about them for the purpose of machine learning is that their value, the capacitance, is determined by their size. (More conductor area or less space between the conductors means more capacitance.)

“The only thing they depend on is geometry, basically the space between wires,” Verma says. “And that’s the one thing you can control very, very well in CMOS technologies.” EnCharge builds an array of precisely valued capacitors in the layers of copper interconnect above the silicon of its processors.

The data that makes up most of a neural network model, the weights, are stored in an array of digital memory cells, each connected to a capacitor. The data the neural network is analyzing is then multiplied by the weight bits using simple logic built into the cell, and the results are stored as charge on the capacitors. Then the array switches into a mode where all the charges from the results of multiplications accumulate and the result is digitized.
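The sequence just described (multiply in the cell, store the result as charge, then accumulate and digitize) can be sketched in a few lines. This is a simplified illustration under stated assumptions, not EnCharge’s actual circuit: it treats inputs and weights as single bits, uses an AND gate as the “simple logic,” gives every cell an identical 1-femtofarad capacitor, and models the digitizer as an ideal rounding step. All function names are hypothetical.

```python
def charge_domain_mac(input_bits, weight_bits, capacitances_ff, v_dd=1.0):
    """One multiply-and-accumulate step in the charge domain.

    Returns (total charge in femtocoulombs, shared voltage in volts).
    """
    # Step 1: each cell multiplies its input bit by its stored weight bit.
    products = [x & w for x, w in zip(input_bits, weight_bits)]
    # Step 2: a product of 1 charges the cell's capacitor to v_dd (Q = C * V).
    charges = [c * v_dd * p for c, p in zip(capacitances_ff, products)]
    # Step 3: connecting the capacitors pools their charge, so the shared
    # voltage is the total charge divided by the total capacitance.
    total_charge = sum(charges)
    shared_voltage = total_charge / sum(capacitances_ff)
    return total_charge, shared_voltage


def digitize(shared_voltage, n_cells, v_dd=1.0):
    """Ideal converter: map the shared voltage back to a count of 1-products."""
    return round(shared_voltage / v_dd * n_cells)


input_bits = [1, 0, 1, 1]
weight_bits = [1, 1, 0, 1]
capacitances_ff = [1.0, 1.0, 1.0, 1.0]  # assumed identical capacitors

total_charge, shared_voltage = charge_domain_mac(
    input_bits, weight_bits, capacitances_ff
)
result = digitize(shared_voltage, n_cells=len(input_bits))
print(total_charge, shared_voltage, result)  # 2.0 0.5 2
```

Because the result depends only on ratios of capacitances, any mismatch between capacitors feeds directly into the answer, which is why being able to fix their geometry precisely matters.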

While the initial invention, which dates back to 2017, was a big moment for Verma’s lab, he says the basic concept is quite old. “It’s called switched capacitor operation; it turns out we’ve been doing it for decades,” he says. It’s used, for example, in commercial high-precision analog-to-digital converters. “Our innovation was figuring out how you can use it in an architecture that does in-memory computing.”

Competition

Verma’s lab and EnCharge spent years proving that the technology was programmable and scalable and co-optimizing it with an architecture and software stack that suits AI needs that are vastly different than they were in 2017. The resulting products are with early-access developers now, and the company, which recently raised US $100 million from Samsung Venture, Foxconn, and others, plans another round of early-access collaborations.

But EnCharge is entering a competitive field, and among the competitors is the big kahuna, Nvidia. At its big developer event in March, GTC, Nvidia announced plans for a PC product built around its GB10 CPU-GPU combination and a workstation built around the upcoming GB300.

And there will be plenty of competition in the low-power space EnCharge is after. Some of the competitors even use a form of computing-in-memory. D-Matrix and Axelera, for example, took part of analog AI’s promise, embedding the memory in the computing, but do everything digitally. They each developed custom SRAM memory cells that both store and multiply and do the summation operation digitally, as well. There’s even at least one more-traditional analog AI startup in the mix, Sagence.

Verma is, unsurprisingly, optimistic. The new technology “means advanced, secure, and personalized AI can run locally, without relying on cloud infrastructure,” he said in a statement. “We hope this will radically expand what you can do with AI.”
